This is actually an essential dimension when talking about changing the way companies build software. They can pick the best automation tools available or hire the best consultants on the market to build their architecture, but if the cultural aspects aren't improved, they may not ship software the way they expect. Today I'm going to talk about one of these cultural aspects: silos.

The Traditional Division

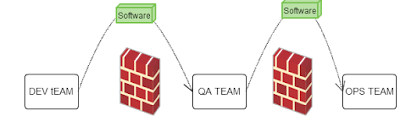

It's very common to see companies splitting up teams by skill. A traditional division that follows this idea looks like this:

In this model, software creation is supported by a series of specialised teams. These teams will "somehow" talk to each other in order to build a solution. With this structure, companies can have specialists on each team who lead people and ensure that issues and solutions are addressed properly. The structure can change a bit depending on the case. Some companies appoint people to supervise (manage) the whole pipeline. They can be responsible for making everybody talk, for making sure environments are delivered on schedule, that issues are addressed by the right people or groups, and so on.

Software has been delivered on top of this model for years. But we're living in a different era now, and there are some scenarios this model does not handle well.

The Software Complexity

- The volume of data that systems need to handle is far bigger than before. Architectural decisions have to be made in order to build systems that meet business expectations. Such decisions can even change the way a business sells its products to its customers.

- Whether or not to move to the cloud is now a strategic business decision, not one made by some geek in the ops area.

- Solutions need to support traffic growth within seconds. Systems need to be elastic, but architectural decisions can make that impossible to achieve. Ops and architecture teams need to be on the same page before taking any architectural decision.

- How do you test a solution with the characteristics highlighted above? The QA team will need special skills.

Teams need to be on the same page from the beginning. Even small decisions can affect everybody's work and make the release process slower, release after release, as the application grows. What happens with the traditional model is that people tend to ignore the complexity of the software as a whole and focus on the area where they are experts. People can work around this issue and achieve the necessary coordination even in the traditional model, but it's going to demand more effort from everybody involved.

Complexity is handled well when people understand the system as a whole. Adding barriers between teams does not help achieve this goal.

The Barriers Between Teams

The more barriers are created between business and production, the less agile the development cycle will be. It doesn't matter how many experts are in each silo or how many automation tools they implement: the barriers between them will always be something to improve. By removing silos, people are able to see the system as a whole rather than only their own "working area". The real benefits come when the system is optimised as a whole instead of in specific parts.
